+  +

+

+ +

+  +

+

+ +

+

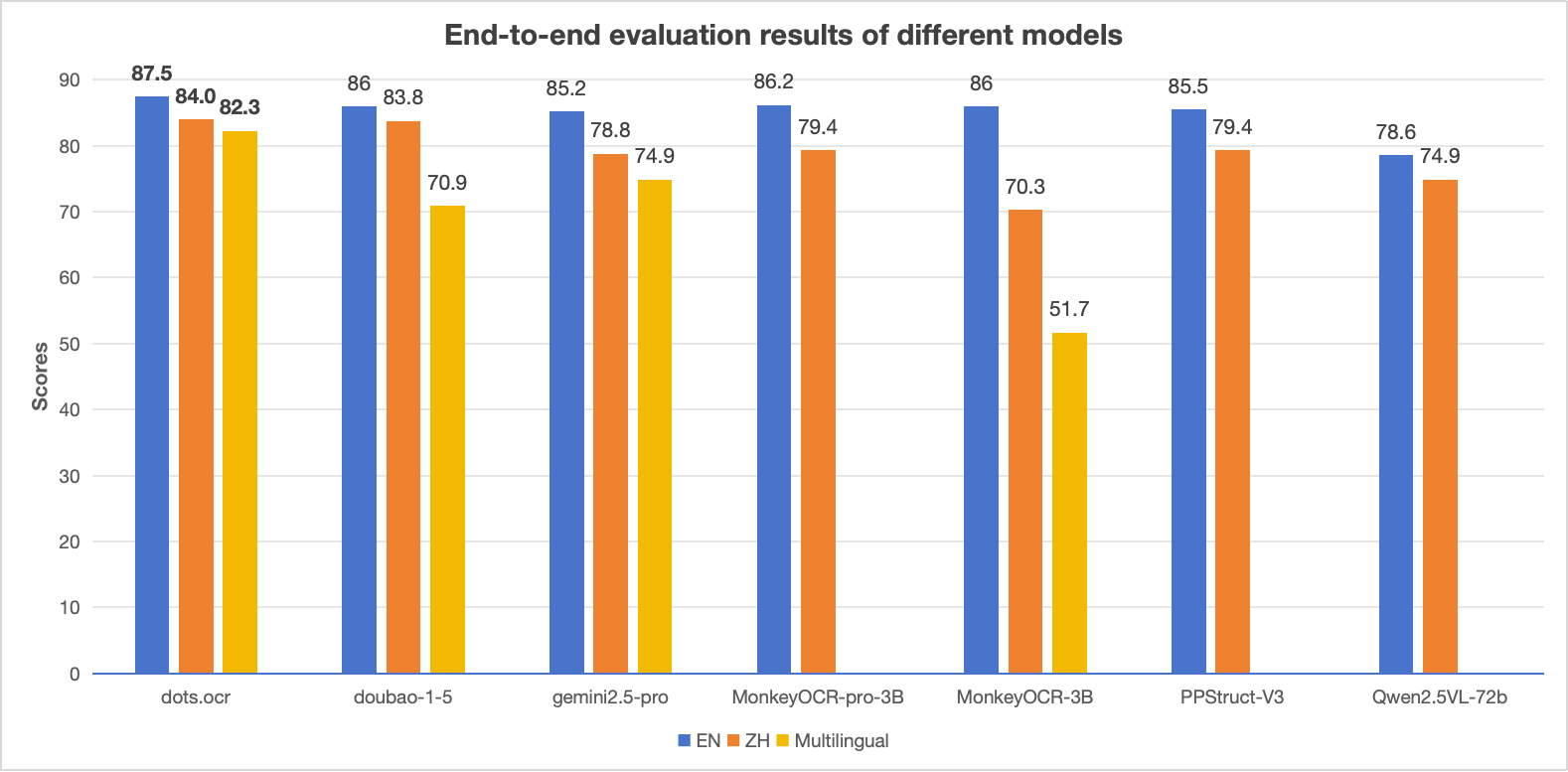

+> **Notes:**

+> - The EN, ZH metrics are the end2end evaluation results of [OmniDocBench](https://github.com/opendatalab/OmniDocBench), and Multilingual metric is the end2end evaluation results of dots.ocr-bench.

+

+

+## News

+* ```2025.07.30 ``` 🚀 We release [dots.ocr](https://github.com/rednote-hilab/dots.ocr), — a multilingual documents parsing model based on 1.7b llm, with SOTA performance.

+

+

+

+## Benchmark Results

+

+### 1. OmniDocBench

+

+#### The end-to-end evaluation results of different tasks.

+

+

+

+> **Notes:**

+> - The EN, ZH metrics are the end2end evaluation results of [OmniDocBench](https://github.com/opendatalab/OmniDocBench), and Multilingual metric is the end2end evaluation results of dots.ocr-bench.

+

+

+## News

+* ```2025.07.30 ``` 🚀 We release [dots.ocr](https://github.com/rednote-hilab/dots.ocr), — a multilingual documents parsing model based on 1.7b llm, with SOTA performance.

+

+

+

+## Benchmark Results

+

+### 1. OmniDocBench

+

+#### The end-to-end evaluation results of different tasks.

+

+| Model Type |

+Methods | +OverallEdit↓ | +TextEdit↓ | +FormulaEdit↓ | +TableTEDS↑ | +TableEdit↓ | +Read OrderEdit↓ | +||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| EN | +ZH | +EN | +ZH | +EN | +ZH | +EN | +ZH | +EN | +ZH | +EN | +ZH | +||

| Pipeline Tools |

+MinerU | +0.150 | +0.357 | +0.061 | +0.215 | +0.278 | +0.577 | +78.6 | +62.1 | +0.180 | +0.344 | +0.079 | +0.292 | +

| Marker | +0.336 | +0.556 | +0.080 | +0.315 | +0.530 | +0.883 | +67.6 | +49.2 | +0.619 | +0.685 | +0.114 | +0.340 | +|

| Mathpix | +0.191 | +0.365 | +0.105 | +0.384 | +0.306 | +0.454 | +77.0 | +67.1 | +0.243 | +0.320 | +0.108 | +0.304 | +|

| Docling | +0.589 | +0.909 | +0.416 | +0.987 | +0.999 | +1 | +61.3 | +25.0 | +0.627 | +0.810 | +0.313 | +0.837 | +|

| Pix2Text | +0.320 | +0.528 | +0.138 | +0.356 | +0.276 | +0.611 | +73.6 | +66.2 | +0.584 | +0.645 | +0.281 | +0.499 | +|

| Unstructured | +0.586 | +0.716 | +0.198 | +0.481 | +0.999 | +1 | +0 | +0.06 | +1 | +0.998 | +0.145 | +0.387 | +|

| OpenParse | +0.646 | +0.814 | +0.681 | +0.974 | +0.996 | +1 | +64.8 | +27.5 | +0.284 | +0.639 | +0.595 | +0.641 | +|

| PPStruct-V3 | +0.145 | +0.206 | +0.058 | +0.088 | +0.295 | +0.535 | +- | +- | +0.159 | +0.109 | +0.069 | +0.091 | +|

| Expert VLMs |

+GOT-OCR | +0.287 | +0.411 | +0.189 | +0.315 | +0.360 | +0.528 | +53.2 | +47.2 | +0.459 | +0.520 | +0.141 | +0.280 | +

| Nougat | +0.452 | +0.973 | +0.365 | +0.998 | +0.488 | +0.941 | +39.9 | +0 | +0.572 | +1.000 | +0.382 | +0.954 | +|

| Mistral OCR | +0.268 | +0.439 | +0.072 | +0.325 | +0.318 | +0.495 | +75.8 | +63.6 | +0.600 | +0.650 | +0.083 | +0.284 | +|

| OLMOCR-sglang | +0.326 | +0.469 | +0.097 | +0.293 | +0.455 | +0.655 | +68.1 | +61.3 | +0.608 | +0.652 | +0.145 | +0.277 | +|

| SmolDocling-256M | +0.493 | +0.816 | +0.262 | +0.838 | +0.753 | +0.997 | +44.9 | +16.5 | +0.729 | +0.907 | +0.227 | +0.522 | +|

| Dolphin | +0.206 | +0.306 | +0.107 | +0.197 | +0.447 | +0.580 | +77.3 | +67.2 | +0.180 | +0.285 | +0.091 | +0.162 | +|

| MinerU 2 | +0.139 | +0.240 | +0.047 | +0.109 | +0.297 | +0.536 | +82.5 | +79.0 | +0.141 | +0.195 | +0.069< | +0.118 | +|

| OCRFlux | +0.195 | +0.281 | +0.064 | +0.183 | +0.379 | +0.613 | +71.6 | +81.3 | +0.253 | +0.139 | +0.086 | +0.187 | +|

| MonkeyOCR-pro-3B | +0.138 | +0.206 | +0.067 | +0.107 | +0.246 | +0.421 | +81.5 | +87.5 | +0.139 | +0.111 | +0.100 | +0.185 | +|

| General VLMs |

+GPT4o | +0.233 | +0.399 | +0.144 | +0.409 | +0.425 | +0.606 | +72.0 | +62.9 | +0.234 | +0.329 | +0.128 | +0.251 | +

| Qwen2-VL-72B | +0.252 | +0.327 | +0.096 | +0.218 | +0.404 | +0.487 | +76.8 | +76.4 | +0.387 | +0.408 | +0.119 | +0.193 | +|

| Qwen2.5-VL-72B | +0.214 | +0.261 | +0.092 | +0.18 | +0.315 | +0.434 | +82.9 | +83.9 | +0.341 | +0.262 | +0.106 | +0.168 | +|

| Gemini2.5-Pro | +0.148 | +0.212 | +0.055 | +0.168 | +0.356 | +0.439 | +85.8 | +86.4 | +0.13 | +0.119 | +0.049 | +0.121 | +|

| doubao-1-5-thinking-vision-pro-250428 | +0.140 | +0.162 | +0.043 | +0.085 | +0.295 | +0.384 | +83.3 | +89.3 | +0.165 | +0.085 | +0.058 | +0.094 | +|

| Expert VLMs | +dots.ocr | +0.125 | +0.160 | +0.032 | +0.066 | +0.329 | +0.416 | +88.6 | +89.0 | +0.099 | +0.092 | +0.040 | +0.067 | +

| Model Type |

+Models | +Book | +Slides | +Financial Report |

+Textbook | +Exam Paper |

+Magazine | +Academic Papers |

+Notes | +Newspaper | +Overall | +

|---|---|---|---|---|---|---|---|---|---|---|---|

| Pipeline Tools |

+MinerU | +0.055 | +0.124 | +0.033 | +0.102 | +0.159 | +0.072 | +0.025 | +0.984 | +0.171 | +0.206 | +

| Marker | +0.074 | +0.340 | +0.089 | +0.319 | +0.452 | +0.153 | +0.059 | +0.651 | +0.192 | +0.274 | +|

| Mathpix | +0.131 | +0.220 | +0.202 | +0.216 | +0.278 | +0.147 | +0.091 | +0.634 | +0.690 | +0.300 | +|

| Expert VLMs |

+GOT-OCR | +0.111 | +0.222 | +0.067 | +0.132 | +0.204 | +0.198 | +0.179 | +0.388 | +0.771 | +0.267 | +

| Nougat | +0.734 | +0.958 | +1.000 | +0.820 | +0.930 | +0.830 | +0.214 | +0.991 | +0.871 | +0.806 | +|

| Dolphin | +0.091 | +0.131 | +0.057 | +0.146 | +0.231 | +0.121 | +0.074 | +0.363 | +0.307 | +0.177 | +|

| OCRFlux | +0.068 | +0.125 | +0.092 | +0.102 | +0.119 | +0.083 | +0.047 | +0.223 | +0.536 | +0.149 | +|

| MonkeyOCR-pro-3B | +0.084 | +0.129 | +0.060 | +0.090 | +0.107 | +0.073 | +0.050 | +0.171 | +0.107 | +0.100 | +|

| General VLMs |

+GPT4o | +0.157 | +0.163 | +0.348 | +0.187 | +0.281 | +0.173 | +0.146 | +0.607 | +0.751 | +0.316 | +

| Qwen2.5-VL-7B | +0.148 | +0.053 | +0.111 | +0.137 | +0.189 | +0.117 | +0.134 | +0.204 | +0.706 | +0.205 | +|

| InternVL3-8B | +0.163 | +0.056 | +0.107 | +0.109 | +0.129 | +0.100 | +0.159 | +0.150 | +0.681 | +0.188 | +|

| doubao-1-5-thinking-vision-pro-250428 | +0.048 | +0.048 | +0.024 | +0.062 | +0.085 | +0.051 | +0.039 | +0.096 | +0.181 | +0.073 | +|

| Expert VLMs | +dots.ocr | +0.031 | +0.047 | +0.011 | +0.082 | +0.079 | +0.028 | +0.029 | +0.109 | +0.056 | +0.055 | +

| Methods | +OverallEdit↓ | +TextEdit↓ | +FormulaEdit↓ | +TableTEDS↑ | +TableEdit↓ | +Read OrderEdit↓ | +MonkeyOCR-3B | +0.483 | +0.445 | +0.627 | +50.93 | +0.452 | +0.409 | + +

|---|---|---|---|---|---|---|

| doubao-1-5-thinking-vision-pro-250428 | +0.291 | +0.226 | +0.440 | +71.2 | +0.260 | +0.238 | +

| doubao-1-6 | +0.299 | +0.270 | +0.417 | +71.0 | +0.258 | +0.253 | +

| Gemini2.5-Pro | +0.251 | +0.163 | +0.402 | +77.1 | +0.236 | +0.202 | +

| dots.ocr | +0.177 | +0.075 | +0.297 | +79.2 | +0.186 | +0.152 | +

| Method | +F1@IoU=.50:.05:.95↑ | +F1@IoU=.50↑ | +||||||||

|---|---|---|---|---|---|---|---|---|---|---|

| Overall | +Text | +Formula | +Table | +Picture | +Overall | +Text | +Formula | +Table | +Picture | +DocLayout-YOLO-DocStructBench | +0.733 | +0.694 | +0.480 | +0.803 | +0.619 | +0.806 | +0.779 | +0.620 | +0.858 | +0.678 | + + +

| dots.ocr-parse all | +0.831 | +0.801 | +0.654 | +0.838 | +0.748 | +0.922 | +0.909 | +0.770 | +0.888 | +0.831 | +

| dots.ocr-detection only | +0.845 | +0.816 | +0.716 | +0.875 | +0.765 | +0.930 | +0.917 | +0.832 | +0.918 | +0.843 | +

| Model | +ArXiv | +Old Scans Math |

+Tables | +Old Scans | +Headers and Footers |

+Multi column |

+Long Tiny Text |

+Base | +Overall | +

|---|---|---|---|---|---|---|---|---|---|

| GOT OCR | +52.7 | +52.0 | +0.2 | +22.1 | +93.6 | +42.0 | +29.9 | +94.0 | +48.3 ± 1.1 | +

| Marker | +76.0 | +57.9 | +57.6 | +27.8 | +84.9 | +72.9 | +84.6 | +99.1 | +70.1 ± 1.1 | +

| MinerU | +75.4 | +47.4 | +60.9 | +17.3 | +96.6 | +59.0 | +39.1 | +96.6 | +61.5 ± 1.1 | +

| Mistral OCR | +77.2 | +67.5 | +60.6 | +29.3 | +93.6 | +71.3 | +77.1 | +99.4 | +72.0 ± 1.1 | +

| Nanonets OCR | +67.0 | +68.6 | +77.7 | +39.5 | +40.7 | +69.9 | +53.4 | +99.3 | +64.5 ± 1.1 | +

| GPT-4o (No Anchor) |

+51.5 | +75.5 | +69.1 | +40.9 | +94.2 | +68.9 | +54.1 | +96.7 | +68.9 ± 1.1 | +

| GPT-4o (Anchored) |

+53.5 | +74.5 | +70.0 | +40.7 | +93.8 | +69.3 | +60.6 | +96.8 | +69.9 ± 1.1 | +

| Gemini Flash 2 (No Anchor) |

+32.1 | +56.3 | +61.4 | +27.8 | +48.0 | +58.7 | +84.4 | +94.0 | +57.8 ± 1.1 | +

| Gemini Flash 2 (Anchored) |

+54.5 | +56.1 | +72.1 | +34.2 | +64.7 | +61.5 | +71.5 | +95.6 | +63.8 ± 1.2 | +

| Qwen 2 VL (No Anchor) |

+19.7 | +31.7 | +24.2 | +17.1 | +88.9 | +8.3 | +6.8 | +55.5 | +31.5 ± 0.9 | +

| Qwen 2.5 VL (No Anchor) |

+63.1 | +65.7 | +67.3 | +38.6 | +73.6 | +68.3 | +49.1 | +98.3 | +65.5 ± 1.2 | +

| olmOCR v0.1.75 (No Anchor) |

+71.5 | +71.4 | +71.4 | +42.8 | +94.1 | +77.7 | +71.0 | +97.8 | +74.7 ± 1.1 | +

| olmOCR v0.1.75 (Anchored) |

+74.9 | +71.2 | +71.0 | +42.2 | +94.5 | +78.3 | +73.3 | +98.3 | +75.5 ± 1.0 | +

| MonkeyOCR-pro-3B | +83.8 | +68.8 | +74.6 | +36.1 | +91.2 | +76.6 | +80.1 | +95.3 | +75.8 ± 1.0 | +

| dots.ocr | +82.1 | +64.2 | +88.3 | +40.9 | +94.1 | +82.4 | +81.2 | +99.5 | +79.1 ± 1.0 | +

如果您是本模型的贡献者,我们邀请您根据模型贡献文档,及时完善模型卡片内容。

\ No newline at end of file + +

+ +

+ +

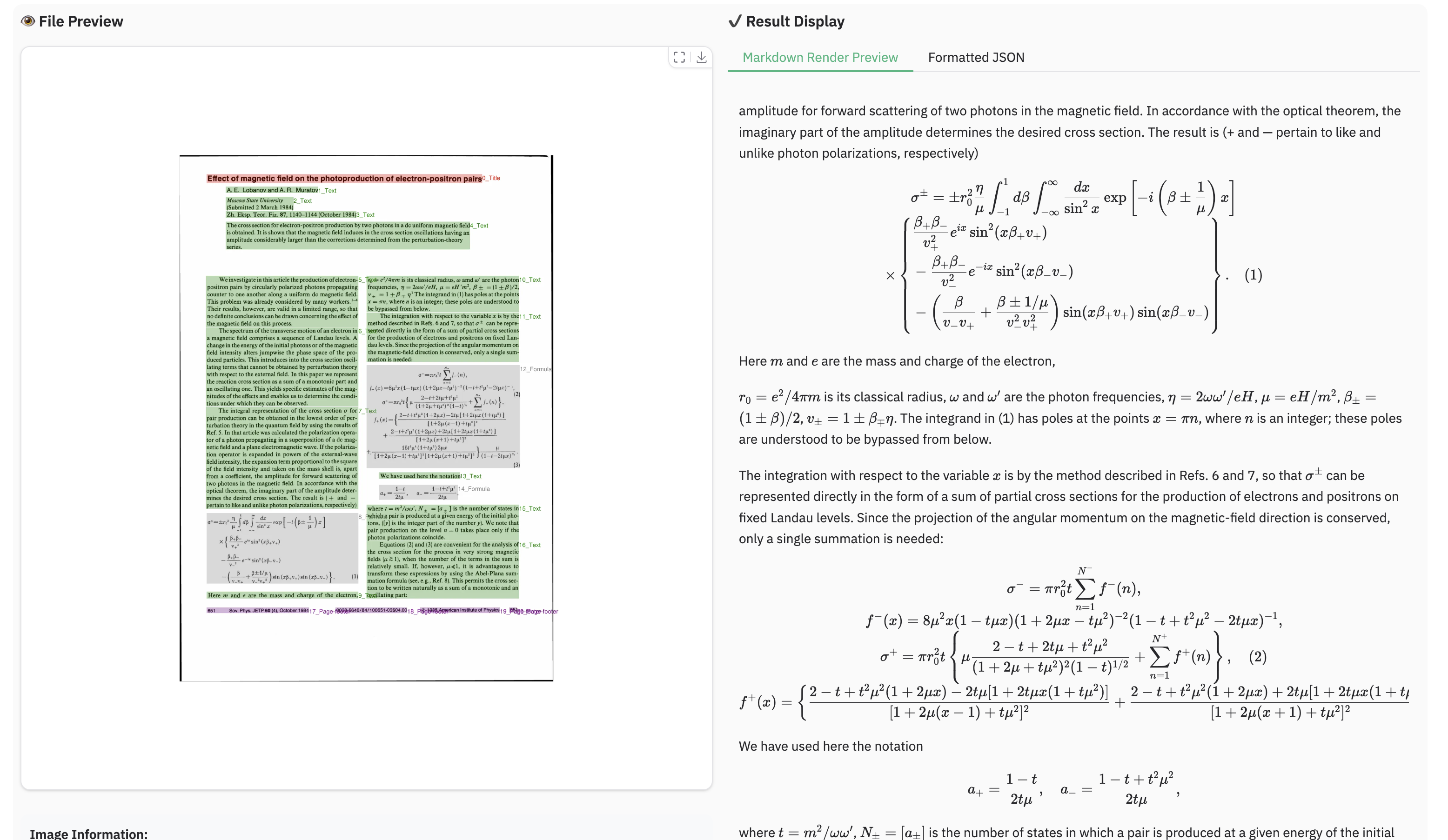

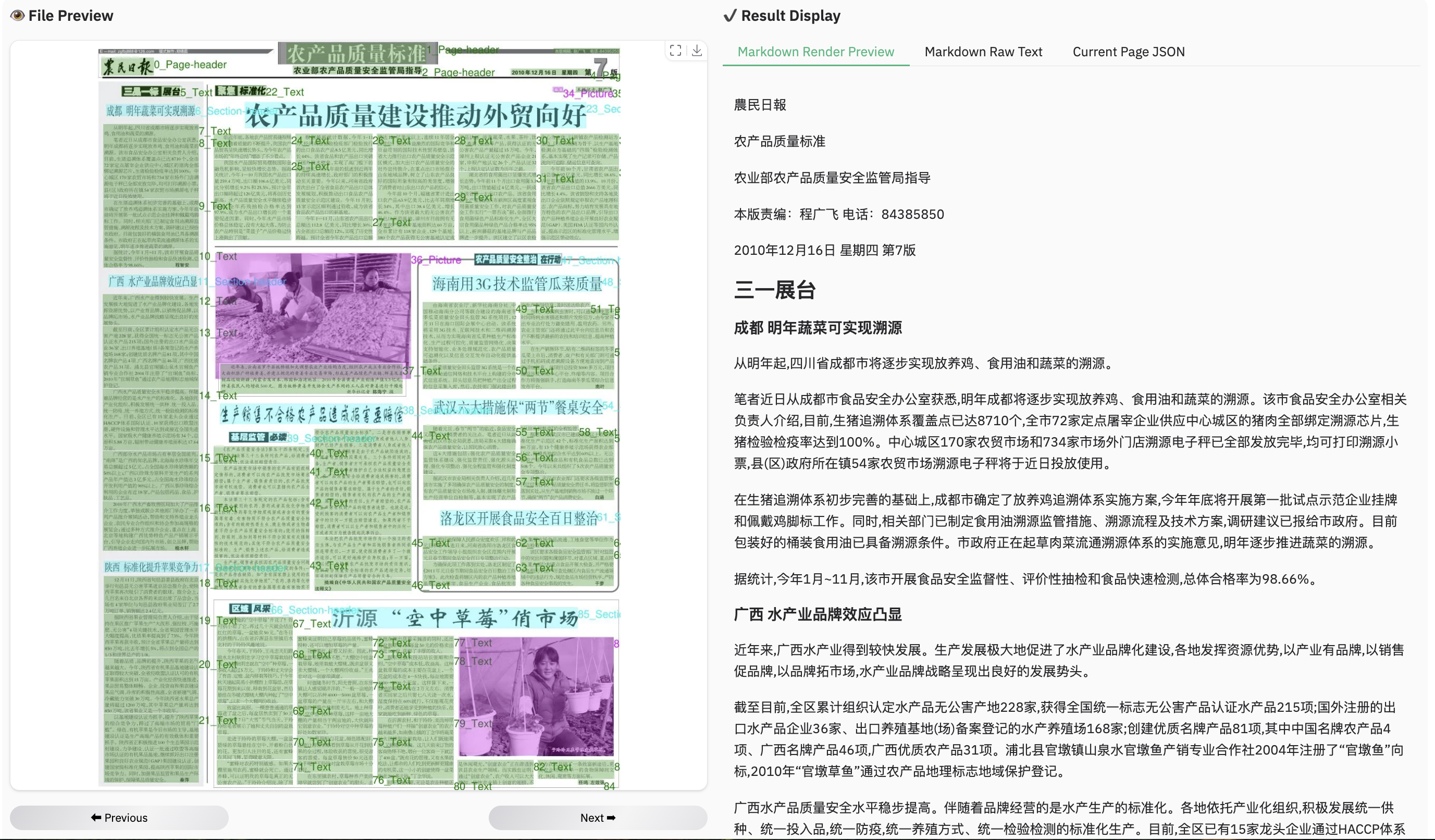

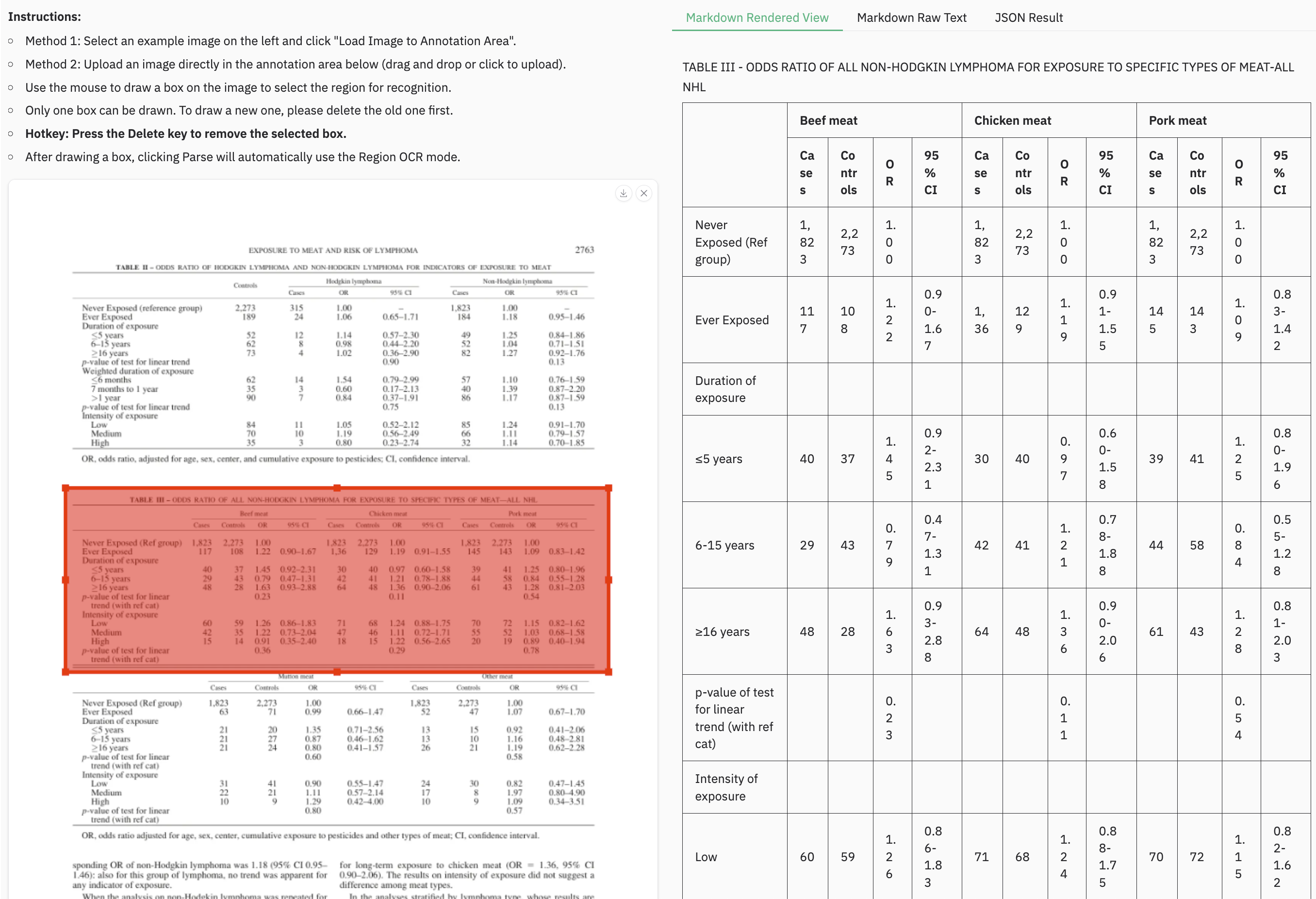

+### Example for table document

+

+

+### Example for table document

+ +

+ +

+ +

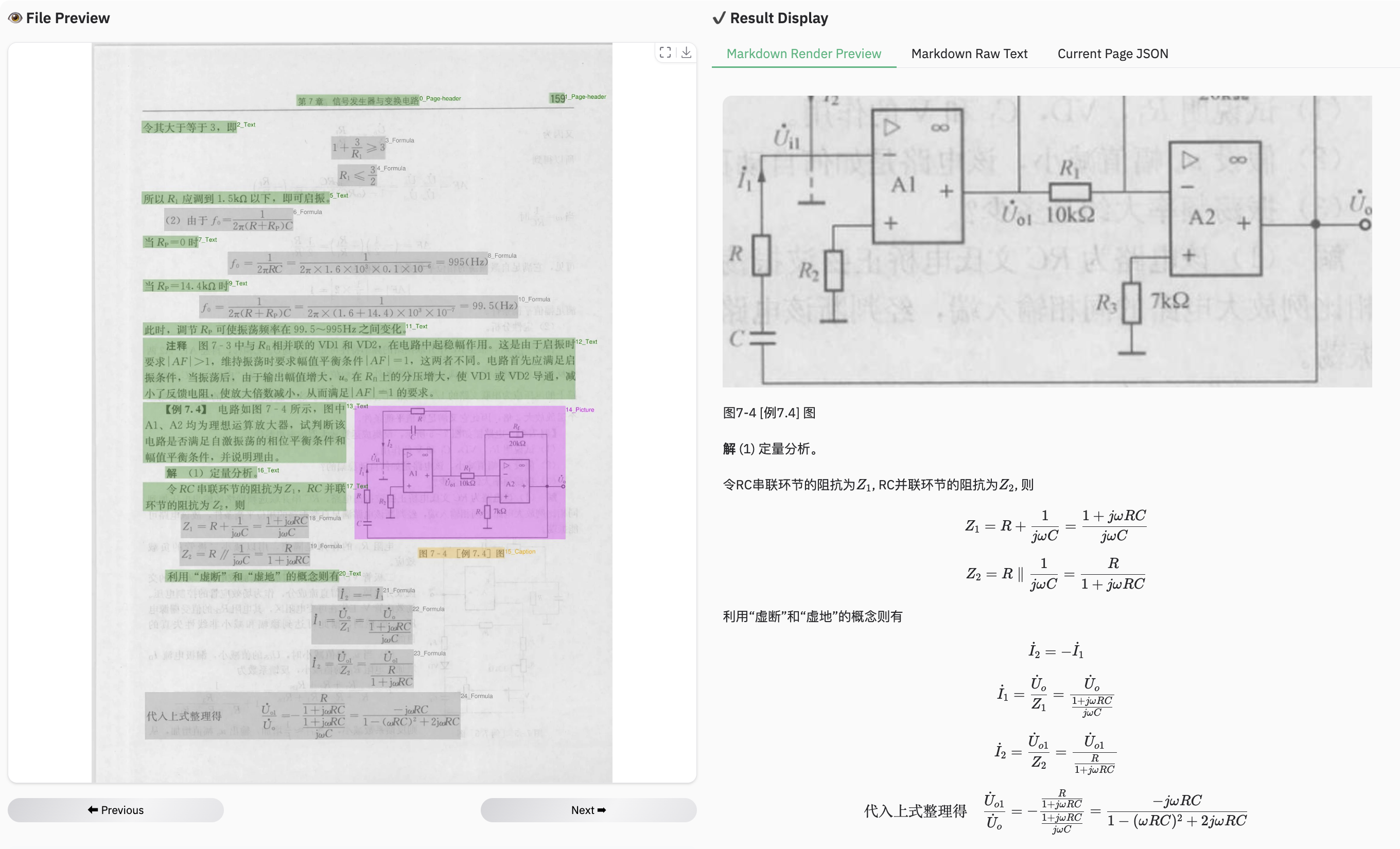

+### Example for multilingual document

+

+

+### Example for multilingual document

+ +

+ +

+ +

+ +

+ +

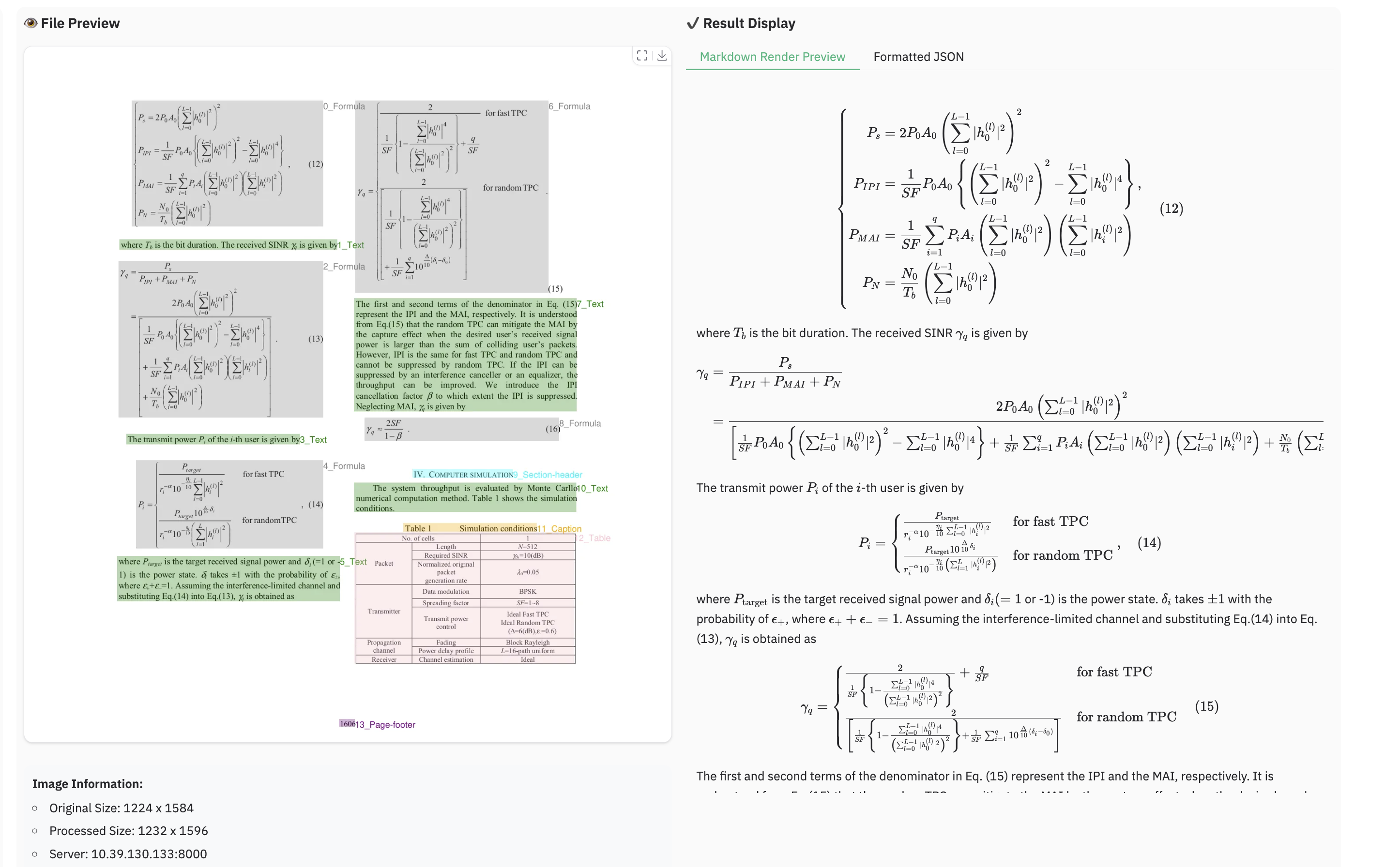

+### Example for reading order

+

+

+### Example for reading order

+ +

+### Example for grounding ocr

+

+

+### Example for grounding ocr

+ +

+

+## Acknowledgments

+We would like to thank [Qwen2.5-VL](https://github.com/QwenLM/Qwen2.5-VL), [aimv2](https://github.com/apple/ml-aim), [MonkeyOCR](https://github.com/Yuliang-Liu/MonkeyOCR),

+[OmniDocBench](https://github.com/opendatalab/OmniDocBench), [PyMuPDF](https://github.com/pymupdf/PyMuPDF), for providing code and models.

+

+We also thank [DocLayNet](https://github.com/DS4SD/DocLayNet), [M6Doc](https://github.com/HCIILAB/M6Doc), [CDLA](https://github.com/buptlihang/CDLA), [D4LA](https://github.com/AlibabaResearch/AdvancedLiterateMachinery) for providing valuable datasets.

+

+## Limitation & Future Work

+

+- **Complex Document Elements:**

+ - **Table&Formula**: dots.ocr is not yet perfect for high-complexity tables and formula extraction.

+ - **Picture**: Pictures in documents are currently not parsed.

+

+- **Parsing Failures:** The model may fail to parse under certain conditions:

+ - When the character-to-pixel ratio is excessively high. Try enlarging the image or increasing the PDF parsing DPI (a setting of 200 is recommended). However, please note that the model performs optimally on images with a resolution under 11289600 pixels.

+ - Continuous special characters, such as ellipses (`...`) and underscores (`_`), may cause the prediction output to repeat endlessly. In such scenarios, consider using alternative prompts like `prompt_layout_only_en`, `prompt_ocr`, or `prompt_grounding_ocr` ([details here](https://github.com/rednote-hilab/dots.ocr/blob/master/dots_ocr/utils/prompts.py)).

+

+- **Performance Bottleneck:** Despite its 1.7B parameter LLM foundation, **dots.ocr** is not yet optimized for high-throughput processing of large PDF volumes.

+

+We are committed to achieving more accurate table and formula parsing, as well as enhancing the model's OCR capabilities for broader generalization, all while aiming for **a more powerful, more efficient model**. Furthermore, we are actively considering the development of **a more general-purpose perception model** based on Vision-Language Models (VLMs), which would integrate general detection, image captioning, and OCR tasks into a unified framework. **Parsing the content of the pictures in the documents** is also a key priority for our future work.

+We believe that collaboration is the key to tackling these exciting challenges. If you are passionate about advancing the frontiers of document intelligence and are interested in contributing to these future endeavors, we would love to hear from you. Please reach out to us via email at: [yanqing4@xiaohongshu.com].

diff --git a/chat_template.json b/chat_template.json

new file mode 100644

index 0000000..87a662f

--- /dev/null

+++ b/chat_template.json

@@ -0,0 +1,3 @@

+{

+ "chat_template": "{% set image_count = namespace(value=0) %}{% set video_count = namespace(value=0) %}{%- for m in messages %}{%- if m.role == 'system' %}{{- '<|system|>' + m.content + '<|endofsystem|>\n' }}{%- elif m.role == 'user' %}{% if m.content is string %}{{- '<|user|>' + m.content + '<|endofuser|>' }}{% else %} {% for content in m.content %}{% if content['type'] == 'image' or 'image' in content or 'image_url' in content %}{% set image_count.value = image_count.value + 1 %}{% if add_vision_id %}Picture {{ image_count.value }}: {% endif %}<|img|><|imgpad|><|endofimg|>{% elif content['type'] == 'video' or 'video' in content %}{% set video_count.value = video_count.value + 1 %}{% if add_vision_id %}Video {{ video_count.value }}: {% endif %}<|img|><|video_pad|><|endofimg|>{% elif 'text' in content %}{{ content['text'] }}{% endif %}{% endfor %}{%- endif %}{%- elif m.role == 'assistant' %}{{- '<|assistant|>' + m.content }}{%- if not loop.last %}{{- '<|endofassistant|>' }}{%- endif %}{%- endif %}{%- endfor %}{%- if messages[-1].role != 'assistant' %}{{- '<|assistant|>' }}{%- endif %}"

+}

\ No newline at end of file

diff --git a/config.json b/config.json

new file mode 100644

index 0000000..7ea2c09

--- /dev/null

+++ b/config.json

@@ -0,0 +1,51 @@

+{

+ "architectures": [

+ "DotsOCRForCausalLM"

+ ],

+ "model_type": "dots_ocr",

+ "auto_map": {

+ "AutoConfig": "configuration_dots.DotsOCRConfig",

+ "AutoModelForCausalLM": "modeling_dots_ocr.DotsOCRForCausalLM"

+ },

+ "attention_bias": true,

+ "attention_dropout": 0.0,

+ "hidden_act": "silu",

+ "hidden_size": 1536,

+ "initializer_range": 0.02,

+ "intermediate_size": 8960,

+ "max_position_embeddings": 131072,

+ "max_window_layers": 28,

+ "num_attention_heads": 12,

+ "num_hidden_layers": 28,

+ "num_key_value_heads": 2,

+ "rms_norm_eps": 1e-06,

+ "rope_scaling": null,

+ "rope_theta": 1000000,

+ "sliding_window": 131072,

+ "tie_word_embeddings": false,

+ "torch_dtype": "bfloat16",

+ "transformers_version": "4.51.0",

+ "use_cache": true,

+ "use_sliding_window": false,

+ "vocab_size": 151936,

+ "image_token_id": 151665,

+ "video_token_id": 151656,

+ "vision_config": {

+ "embed_dim": 1536,

+ "hidden_size": 1536,

+ "intermediate_size": 4224,

+ "num_hidden_layers": 42,

+ "num_attention_heads": 12,

+ "num_channels": 3,

+ "patch_size": 14,

+ "post_norm": true,

+ "rms_norm_eps": 1e-05,

+ "spatial_merge_size": 2,

+ "temporal_patch_size": 1,

+ "use_bias": false,

+ "attn_implementation": "flash_attention_2",

+ "init_merger_std": 0.02,

+ "initializer_range": 0.02,

+ "is_causal": false

+ }

+}

\ No newline at end of file

diff --git a/configuration.json b/configuration.json

new file mode 100644

index 0000000..4aef15d

--- /dev/null

+++ b/configuration.json

@@ -0,0 +1 @@

+{"framework": "pytorch", "task": "image-text-to-text", "allow_remote": true}

\ No newline at end of file

diff --git a/configuration_dots.py b/configuration_dots.py

new file mode 100644

index 0000000..55901c2

--- /dev/null

+++ b/configuration_dots.py

@@ -0,0 +1,76 @@

+from typing import Any, Optional

+from transformers.configuration_utils import PretrainedConfig

+from transformers.models.qwen2 import Qwen2Config

+from transformers import Qwen2_5_VLProcessor, AutoProcessor

+from transformers.models.auto.configuration_auto import CONFIG_MAPPING

+

+

+class DotsVisionConfig(PretrainedConfig):

+ model_type: str = "dots_vit"

+

+ def __init__(

+ self,

+ embed_dim: int = 1536, # vision encoder embed size

+ hidden_size: int = 1536, # after merger hidden size

+ intermediate_size: int = 4224,

+ num_hidden_layers: int = 42,

+ num_attention_heads: int = 12,

+ num_channels: int = 3,

+ patch_size: int = 14,

+ spatial_merge_size: int = 2,

+ temporal_patch_size: int = 1,

+ rms_norm_eps: float = 1e-5,

+ use_bias: bool = False,

+ attn_implementation="flash_attention_2", # "eager","sdpa","flash_attention_2"

+ initializer_range=0.02,

+ init_merger_std=0.02,

+ is_causal=False, # ve causal forward

+ post_norm=True,

+ gradient_checkpointing=False,

+ **kwargs: Any,

+ ):

+ super().__init__(**kwargs)

+ self.embed_dim = embed_dim

+ self.hidden_size = hidden_size

+ self.intermediate_size = intermediate_size

+ self.num_hidden_layers = num_hidden_layers

+ self.num_attention_heads = num_attention_heads

+ self.num_channels = num_channels

+ self.patch_size = patch_size

+ self.spatial_merge_size = spatial_merge_size

+ self.temporal_patch_size = temporal_patch_size

+ self.rms_norm_eps = rms_norm_eps

+ self.use_bias = use_bias

+ self.attn_implementation = attn_implementation

+ self.initializer_range = initializer_range

+ self.init_merger_std = init_merger_std

+ self.is_causal = is_causal

+ self.post_norm = post_norm

+ self.gradient_checkpointing = gradient_checkpointing

+

+

+

+class DotsOCRConfig(Qwen2Config):

+ model_type = "dots_ocr"

+ def __init__(self,

+ image_token_id = 151665,

+ video_token_id = 151656,

+ vision_config: Optional[dict] = None, *args, **kwargs):

+ super().__init__(*args, **kwargs)

+ self.image_token_id = image_token_id

+ self.video_token_id = video_token_id

+ self.vision_config = DotsVisionConfig(**(vision_config or {}))

+

+ def save_pretrained(self, save_directory, **kwargs):

+ self._auto_class = None

+ super().save_pretrained(save_directory, **kwargs)

+

+

+class DotsVLProcessor(Qwen2_5_VLProcessor):

+ def __init__(self, image_processor=None, tokenizer=None, chat_template=None, **kwargs):

+ super().__init__(image_processor, tokenizer, chat_template=chat_template)

+ self.image_token = "<|imgpad|>" if not hasattr(tokenizer, "image_token") else tokenizer.image_token

+

+

+AutoProcessor.register("dots_ocr", DotsVLProcessor)

+CONFIG_MAPPING.register("dots_ocr", DotsOCRConfig)

diff --git a/generation_config.json b/generation_config.json

new file mode 100644

index 0000000..c6f6906

--- /dev/null

+++ b/generation_config.json

@@ -0,0 +1,7 @@

+{

+ "max_length": 32768,

+ "eos_token_id": [

+ 151643,

+ 151673

+ ]

+}

diff --git a/merges.txt b/merges.txt

new file mode 100644

index 0000000..7ce1d95

--- /dev/null

+++ b/merges.txt

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:599bab54075088774b1733fde865d5bd747cbcc7a547c5bc12610e874e26f5e3

+size 1671839

diff --git a/model-00001-of-00002.safetensors b/model-00001-of-00002.safetensors

new file mode 100644

index 0000000..6a5014f

--- /dev/null

+++ b/model-00001-of-00002.safetensors

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:686da8b6a33f88d4fc83092a1f601dbb12ca4e639942190771a61f5cc287aa24

+size 135

diff --git a/model-00002-of-00002.safetensors b/model-00002-of-00002.safetensors

new file mode 100644

index 0000000..09e3c4a

--- /dev/null

+++ b/model-00002-of-00002.safetensors

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:8d00568ee09e48f30b2ebd2b2245f6fa07bb2e1afcc3c0300f8298d6a52abf49

+size 135

diff --git a/model.safetensors.index.json b/model.safetensors.index.json

new file mode 100644

index 0000000..8a62c07

--- /dev/null

+++ b/model.safetensors.index.json

@@ -0,0 +1,650 @@

+{

+ "metadata": {

+ "total_size": 6078358528

+ },

+ "weight_map": {

+ "lm_head.weight": "model-00001-of-00002.safetensors",

+ "model.embed_tokens.weight": "model-00001-of-00002.safetensors",

+ "model.layers.0.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.0.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.0.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.0.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.0.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.0.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.0.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.0.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.0.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.0.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.0.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.0.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.1.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.1.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.1.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.1.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.1.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.1.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.1.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.1.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.1.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.1.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.1.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.1.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.10.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.10.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.10.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.10.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.10.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.10.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.10.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.10.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.10.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.10.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.10.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.10.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.11.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.11.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.11.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.11.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.11.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.11.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.11.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.11.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.11.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.11.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.11.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.11.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.12.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.12.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.12.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.12.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.12.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.12.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.12.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.12.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.12.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.12.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.12.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.12.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.13.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.13.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.13.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.13.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.13.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.13.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.13.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.13.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.13.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.13.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.13.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.13.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.14.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.14.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.14.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.14.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.14.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.14.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.14.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.14.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.14.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.14.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.14.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.14.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.15.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.15.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.15.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.15.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.15.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.15.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.15.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.15.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.15.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.15.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.15.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.15.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.16.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.16.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.16.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.16.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.16.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.16.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.16.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.16.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.16.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.16.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.16.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.16.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.17.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.17.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.17.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.17.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.17.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.17.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.17.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.17.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.17.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.17.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.17.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.17.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.18.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.18.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.18.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.18.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.18.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.18.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.18.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.18.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.18.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.18.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.18.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.18.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.19.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.19.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.19.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.19.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.19.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.19.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.19.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.19.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.19.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.19.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.19.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.19.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.2.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.2.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.2.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.2.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.2.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.2.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.2.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.2.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.2.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.2.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.2.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.2.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.20.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.20.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.20.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.20.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.20.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.20.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.20.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.20.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.20.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.20.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.20.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.20.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.21.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.21.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.21.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.21.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.21.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.21.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.21.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.21.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.21.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.21.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.21.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.21.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.22.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.22.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.22.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.22.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.22.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.22.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.22.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.22.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.22.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.22.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.22.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.22.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.23.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.23.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.23.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.23.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.23.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.23.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.23.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.23.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.23.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.23.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.23.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.23.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.24.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.24.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.24.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.24.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.24.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.24.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.24.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.24.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.24.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.24.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.24.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.24.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.25.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.25.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.25.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.25.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.25.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.25.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.25.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.25.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.25.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.25.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.25.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.25.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.26.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.26.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.26.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.26.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.26.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.26.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.26.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.26.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.26.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.26.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.26.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.26.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.27.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.27.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.27.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.27.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.27.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.27.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.27.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.27.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.27.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.27.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.27.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.27.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.3.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.3.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.3.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.3.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.3.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.3.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.3.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.3.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.3.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.3.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.3.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.3.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.4.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.4.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.4.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.4.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.4.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.4.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.4.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.4.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.4.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.4.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.4.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.4.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.5.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.5.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.5.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.5.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.5.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.5.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.5.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.5.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.5.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.5.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.5.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.5.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.6.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.6.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.6.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.6.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.6.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.6.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.6.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.6.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.6.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.6.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.6.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.6.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.7.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.7.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.7.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.7.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.7.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.7.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.7.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.7.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.7.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.7.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.7.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.7.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.8.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.8.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.8.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.8.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.8.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.8.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.8.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.8.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.8.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.8.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.8.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.8.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.9.input_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.9.mlp.down_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.9.mlp.gate_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.9.mlp.up_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.9.post_attention_layernorm.weight": "model-00001-of-00002.safetensors",

+ "model.layers.9.self_attn.k_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.9.self_attn.k_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.9.self_attn.o_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.9.self_attn.q_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.9.self_attn.q_proj.weight": "model-00001-of-00002.safetensors",

+ "model.layers.9.self_attn.v_proj.bias": "model-00001-of-00002.safetensors",

+ "model.layers.9.self_attn.v_proj.weight": "model-00001-of-00002.safetensors",

+ "model.norm.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.0.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.0.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.0.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.0.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.0.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.0.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.0.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.1.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.1.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.1.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.1.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.1.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.1.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.1.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.10.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.10.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.10.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.10.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.10.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.10.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.10.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.11.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.11.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.11.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.11.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.11.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.11.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.11.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.12.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.12.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.12.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.12.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.12.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.12.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.12.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.13.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.13.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.13.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.13.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.13.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.13.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.13.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.14.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.14.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.14.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.14.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.14.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.14.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.14.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.15.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.15.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.15.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.15.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.15.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.15.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.15.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.16.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.16.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.16.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.16.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.16.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.16.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.16.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.17.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.17.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.17.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.17.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.17.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.17.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.17.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.18.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.18.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.18.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.18.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.18.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.18.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.18.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.19.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.19.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.19.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.19.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.19.mlp.fc3.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.19.norm1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.19.norm2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.2.attn.proj.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.2.attn.qkv.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.2.mlp.fc1.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.2.mlp.fc2.weight": "model-00001-of-00002.safetensors",

+ "vision_tower.blocks.2.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.2.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.2.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.20.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.20.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.20.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.20.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.20.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.20.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.20.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.21.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.21.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.21.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.21.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.21.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.21.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.21.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.22.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.22.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.22.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.22.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.22.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.22.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.22.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.23.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.23.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.23.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.23.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.23.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.23.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.23.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.24.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.24.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.24.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.24.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.24.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.24.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.24.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.25.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.25.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.25.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.25.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.25.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.25.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.25.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.26.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.26.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.26.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.26.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.26.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.26.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.26.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.27.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.27.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.27.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.27.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.27.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.27.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.27.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.28.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.28.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.28.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.28.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.28.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.28.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.28.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.29.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.29.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.29.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.29.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.29.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.29.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.29.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.3.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.3.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.3.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.3.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.3.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.3.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.3.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.30.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.30.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.30.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.30.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.30.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.30.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.30.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.31.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.31.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.31.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.31.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.31.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.31.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.31.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.32.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.32.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.32.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.32.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.32.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.32.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.32.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.33.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.33.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.33.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.33.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.33.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.33.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.33.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.34.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.34.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.34.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.34.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.34.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.34.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.34.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.35.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.35.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.35.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.35.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.35.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.35.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.35.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.36.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.36.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.36.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.36.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.36.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.36.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.36.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.37.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.37.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.37.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.37.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.37.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.37.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.37.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.38.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.38.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.38.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.38.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.38.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.38.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.38.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.39.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.39.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.39.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.39.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.39.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.39.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.39.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.4.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.4.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.4.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.4.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.4.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.4.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.4.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.40.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.40.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.40.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.40.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.40.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.40.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.40.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.41.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.41.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.41.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.41.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.41.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.41.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.41.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.5.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.5.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.5.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.5.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.5.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.5.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.5.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.6.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.6.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.6.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.6.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.6.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.6.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.6.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.7.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.7.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.7.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.7.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.7.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.7.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.7.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.8.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.8.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.8.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.8.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.8.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.8.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.8.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.9.attn.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.9.attn.qkv.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.9.mlp.fc1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.9.mlp.fc2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.9.mlp.fc3.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.9.norm1.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.blocks.9.norm2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.merger.ln_q.bias": "model-00002-of-00002.safetensors",

+ "vision_tower.merger.ln_q.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.merger.mlp.0.bias": "model-00002-of-00002.safetensors",

+ "vision_tower.merger.mlp.0.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.merger.mlp.2.bias": "model-00002-of-00002.safetensors",

+ "vision_tower.merger.mlp.2.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.patch_embed.patchifier.norm.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.patch_embed.patchifier.proj.bias": "model-00002-of-00002.safetensors",

+ "vision_tower.patch_embed.patchifier.proj.weight": "model-00002-of-00002.safetensors",

+ "vision_tower.post_trunk_norm.weight": "model-00002-of-00002.safetensors"

+ }

+}

\ No newline at end of file

diff --git a/modeling_dots_ocr.py b/modeling_dots_ocr.py

new file mode 100644

index 0000000..79d1c25

--- /dev/null

+++ b/modeling_dots_ocr.py

@@ -0,0 +1,131 @@

+from typing import List, Optional, Tuple, Union

+

+import torch

+from transformers.modeling_outputs import CausalLMOutputWithPast

+from transformers.models.qwen2 import Qwen2ForCausalLM

+

+from .configuration_dots import DotsVisionConfig, DotsOCRConfig

+from .modeling_dots_vision import DotsVisionTransformer

+

+

+DOTS_VLM_MAX_IMAGES = 200

+

+

+class DotsOCRForCausalLM(Qwen2ForCausalLM):

+ config_class = DotsOCRConfig

+

+ def __init__(self, config: DotsOCRConfig):

+ super().__init__(config)

+

+ if isinstance(self.config.vision_config, dict):

+ vision_config = DotsVisionConfig(**self.config.vision_config)

+ self.config.vision_config = vision_config

+ else:

+ vision_config = self.config.vision_config

+

+ self.vision_tower = DotsVisionTransformer(vision_config)

+

+ def prepare_inputs_embeds(

+ self,

+ input_ids: torch.LongTensor,

+ pixel_values: Optional[torch.FloatTensor] = None,

+ grid_thw: Optional[torch.FloatTensor] = None,

+ img_mask: Optional[torch.BoolTensor] = None,

+ ) -> torch.Tensor:

+ inputs_embeds = self.get_input_embeddings()(input_ids)

+

+ if pixel_values is not None:

+ assert img_mask is not None

+ if grid_thw.shape[0] > DOTS_VLM_MAX_IMAGES:

+ print(

+ f"Num image exceeded: {grid_thw.shape[0]} > {DOTS_VLM_MAX_IMAGES}, which may cause FSDP hang"

+ )

+

+ vision_embeddings = self.vision_tower(pixel_values, grid_thw)

+

+ true_indices = torch.nonzero(img_mask).squeeze()

+ if len(true_indices) > vision_embeddings.size(0):

+ print(

+ f"img_mask sum > VE and will be truncated, mask.sum()={len(true_indices)} {vision_embeddings.size(0)=}"

+ )

+ true_indices = true_indices[: vision_embeddings.size(0)]

+ new_img_mask = torch.zeros_like(img_mask, device=img_mask.device)

+ new_img_mask[true_indices[:, 0], true_indices[:, 1]] = True

+ else:

+ new_img_mask = img_mask

+

+ assert (

+ vision_embeddings.size(0) == new_img_mask.sum()

+ ), f"{vision_embeddings.size(0)=}, {new_img_mask.sum()=}"

+

+ inputs_embeds = inputs_embeds.masked_scatter(

+ new_img_mask.to(inputs_embeds.device).unsqueeze(-1).expand_as(inputs_embeds),

+ vision_embeddings.to(inputs_embeds.device).type(inputs_embeds.dtype),

+ )

+

+ return inputs_embeds

+

+ def forward(

+ self,

+ input_ids: torch.LongTensor,

+ pixel_values: Optional[torch.FloatTensor] = None,

+ image_grid_thw: Optional[torch.FloatTensor] = None,

+ inputs_embeds: Optional[torch.Tensor] = None,

+ attention_mask: Optional[torch.Tensor] = None,

+ position_ids: Optional[torch.LongTensor] = None,

+ past_key_values: Optional[List[torch.FloatTensor]] = None,

+ labels: Optional[torch.LongTensor] = None,

+ output_attentions: Optional[bool] = None,

+ output_hidden_states: Optional[bool] = None,

+ return_dict: Optional[bool] = None,

+ use_cache: Optional[bool] = None,

+ logits_to_keep: int = 0,

+ **loss_kwargs,

+ ) -> Union[Tuple, CausalLMOutputWithPast]:

+ return_dict = return_dict if return_dict is not None else self.config.use_return_dict

+ assert len(input_ids) >= 1, f"empty input_ids {input_ids.shape=} will cause gradnorm nan"

+ if inputs_embeds is None:

+ img_mask = input_ids == self.config.image_token_id

+ inputs_embeds = self.prepare_inputs_embeds(input_ids, pixel_values, image_grid_thw, img_mask)

+

+ outputs = super().forward(